Merge pull request 'dev to main periodic update' (#71) from development into main

Reviewed-on: #71

This commit was merged in pull request #71.

This commit is contained in:

@@ -39,15 +39,15 @@ flag. Otherwise exits with code 0. Note that errors set as --warnings will alway

|

||||

### With Python

|

||||

|

||||

* Clone the repository

|

||||

* ```

|

||||

```

|

||||

git clone https://github.com/threefoldfoundation/website-link-checker

|

||||

```

|

||||

* Change directory

|

||||

* ```

|

||||

```

|

||||

cd website-link-checker

|

||||

```

|

||||

* Run the program

|

||||

* ```

|

||||

```

|

||||

python website-link-checker.py https://example.com -e 404 -w all

|

||||

```

|

||||

|

||||

|

||||

@@ -68,16 +68,16 @@ To do so, you simply need to clone the forked repository on your local computer

|

||||

The steps are the following:

|

||||

|

||||

* In the terminal, write the following line to clone the forked `info_grid` repository:

|

||||

* ```

|

||||

```

|

||||

git clone https://github.com/YOUR_GIT_ACCOUNT/info_grid

|

||||

```

|

||||

* make sure to write your own Github account in the URL

|

||||

* To deploy the mdbook locally, first go to the **info_grid** directory:

|

||||

* ```

|

||||

```

|

||||

cd info_grid

|

||||

```

|

||||

* Then write the following line. It will open the manual automatically.

|

||||

* ```

|

||||

```

|

||||

mdbook serve -o

|

||||

```

|

||||

* Note that, by default, the URL is the following, using port `3000`, `http://localhost:3000/`

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

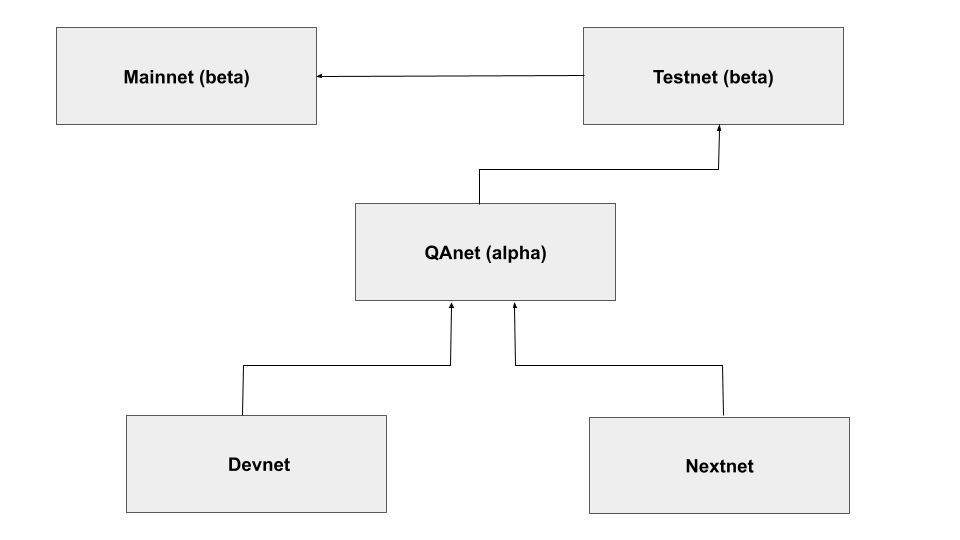

The development cycle is explained below:

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

BIN

collections/collaboration/img/dev_cycle.png

Normal file

BIN

collections/collaboration/img/dev_cycle.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 24 KiB |

@@ -223,27 +223,27 @@ You now have access to the Docker Hub from your local computer. We will then pro

|

||||

* Make sure the Docker Daemon is running

|

||||

* Build the docker container

|

||||

* Template:

|

||||

* ```

|

||||

docker build -t <docker_username>/<docker_repo_name> .

|

||||

```

|

||||

```

|

||||

docker build -t <docker_username>/<docker_repo_name> .

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

docker build -t username/debian12 .

|

||||

```

|

||||

```

|

||||

docker build -t username/debian12 .

|

||||

```

|

||||

* Push the docker container to the [Docker Hub](https://hub.docker.com/)

|

||||

* Template:

|

||||

* ```

|

||||

docker push <your_username>/<docker_repo_name>

|

||||

```

|

||||

```

|

||||

docker push <your_username>/<docker_repo_name>

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

docker push username/debian12

|

||||

```

|

||||

```

|

||||

docker push username/debian12

|

||||

```

|

||||

* You should now see your docker image on the [Docker Hub](https://hub.docker.com/) when you go into the menu option `My Profile`.

|

||||

* Note that you can access this link quickly with the following template:

|

||||

* ```

|

||||

https://hub.docker.com/u/<account_name>

|

||||

```

|

||||

```

|

||||

https://hub.docker.com/u/<account_name>

|

||||

```

|

||||

|

||||

|

||||

|

||||

@@ -265,13 +265,13 @@ We will now convert the Docker image into a Zero-OS flist. This part is so easy

|

||||

* Under `Name`, you will see all your available flists.

|

||||

* Right-click on the flist you want and select `Copy Clean Link`. This URL will be used when deploying on the ThreeFold Playground. We show below the template and an example of what the flist URL looks like.

|

||||

* Template:

|

||||

* ```

|

||||

https://hub.grid.tf/<3BOT_name.3bot>/<docker_username>-<docker_image_name>-<tagname>.flist

|

||||

```

|

||||

```

|

||||

https://hub.grid.tf/<3BOT_name.3bot>/<docker_username>-<docker_image_name>-<tagname>.flist

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

https://hub.grid.tf/idrnd.3bot/username-debian12-latest.flist

|

||||

```

|

||||

```

|

||||

https://hub.grid.tf/idrnd.3bot/username-debian12-latest.flist

|

||||

```

|

||||

|

||||

|

||||

|

||||

@@ -283,16 +283,14 @@ We will now convert the Docker image into a Zero-OS flist. This part is so easy

|

||||

* Choose your parameters (name, VM specs, etc.).

|

||||

* Under `flist`, paste the Debian flist from the TF Hub you copied previously.

|

||||

* Make sure the entrypoint is as follows:

|

||||

* ```

|

||||

/sbin/zinit init

|

||||

```

|

||||

```

|

||||

/sbin/zinit init

|

||||

```

|

||||

* Choose a 3Node to deploy on

|

||||

* Click `Deploy`

|

||||

|

||||

That's it! You can now SSH into your Debian deployment and change the world one line of code at a time!

|

||||

|

||||

*

|

||||

|

||||

## Conclusion

|

||||

|

||||

In this case study, we've seen the overall process of creating a new flist to deploy a Debian workload on a Micro VM on the ThreeFold Playground.

|

||||

|

||||

@@ -616,25 +616,25 @@ You now have access to the Docker Hub from your local computer. We will then pro

|

||||

* Make sure the Docker Daemon is running

|

||||

* Build the docker container (note that, while the tag is optional, it can help to track different versions)

|

||||

* Template:

|

||||

* ```

|

||||

```

|

||||

docker build -t <docker_username>/<docker_repo_name>:<tag> .

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

```

|

||||

docker build -t dockerhubuser/nextcloudaio .

|

||||

```

|

||||

* Push the docker container to the [Docker Hub](https://hub.docker.com/)

|

||||

* Template:

|

||||

* ```

|

||||

```

|

||||

docker push <your_username>/<docker_repo_name>

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

```

|

||||

docker push dockerhubuser/nextcloudaio

|

||||

```

|

||||

* You should now see your docker image on the [Docker Hub](https://hub.docker.com/) when you go into the menu option `My Profile`.

|

||||

* Note that you can access this link quickly with the following template:

|

||||

* ```

|

||||

```

|

||||

https://hub.docker.com/u/<account_name>

|

||||

```

|

||||

|

||||

@@ -656,11 +656,11 @@ We will now convert the Docker image into a Zero-OS flist.

|

||||

* Under `Name`, you will see all your available flists.

|

||||

* Right-click on the flist you want and select `Copy Clean Link`. This URL will be used when deploying on the ThreeFold Playground. We show below the template and an example of what the flist URL looks like.

|

||||

* Template:

|

||||

* ```

|

||||

```

|

||||

https://hub.grid.tf/<3BOT_name.3bot>/<docker_username>-<docker_image_name>-<tagname>.flist

|

||||

```

|

||||

* Example:

|

||||

* ```

|

||||

```

|

||||

threefoldtech-nextcloudaio-latest.flist

|

||||

```

|

||||

|

||||

@@ -833,19 +833,20 @@ output "fqdn" {

|

||||

We now deploy Nextcloud with Terraform. Make sure that you are in the correct folder containing the main and variables files.

|

||||

|

||||

* Initialize Terraform:

|

||||

* ```

|

||||

terraform init

|

||||

```

|

||||

```

|

||||

terraform init

|

||||

```

|

||||

|

||||

* Apply Terraform to deploy Nextcloud:

|

||||

* ```

|

||||

terraform apply

|

||||

```

|

||||

```

|

||||

terraform apply

|

||||

```

|

||||

|

||||

Note that, at any moment, if you want to see the information on your Terraform deployment, write the following:

|

||||

* ```

|

||||

terraform show

|

||||

```

|

||||

|

||||

```

|

||||

terraform show

|

||||

```

|

||||

|

||||

## Nextcloud Setup

|

||||

|

||||

|

||||

@@ -118,25 +118,25 @@ See example below.

|

||||

|

||||

The main template to request information from the API is the following:

|

||||

|

||||

```bash

|

||||

```

|

||||

curl -H "Authorization: bearer <API_token>" https://hub.grid.tf/api/flist/me/<flist_name> -X <COMMAND>

|

||||

```

|

||||

|

||||

For example, if we take the command `DELETE` of the previous section and we want to delete the flist `example-latest.flist` with the API Token `abc12`, we would write the following line:

|

||||

|

||||

```bash

|

||||

```

|

||||

curl -H "Authorization: bearer abc12" https://hub.grid.tf/api/flist/me/example-latest.flist -X DELETE

|

||||

```

|

||||

|

||||

As another template example, if we wanted to rename the flist `current-name-latest.flist` to `new-name-latest.flist`, we would use the following template:

|

||||

|

||||

```bash

|

||||

```

|

||||

curl -H "Authorization: bearer <API_token>" https://hub.grid.tf/api/flist/me/<current_flist_name>/rename/<new_flist_name> -X GET

|

||||

```

|

||||

|

||||

To upload an flist to the ZOS Hub, you would use the following template:

|

||||

|

||||

```bash

|

||||

```

|

||||

curl -H "Authorization: bearer <API_Token>" -X POST -F file=@my-local-archive.tar.gz \

|

||||

https://hub.grid.tf/api/flist/me/upload

|

||||

```

|

||||

@@ -22,19 +22,19 @@ Make sure that you have at least Go 1.19 installed on your machine.

|

||||

## Steps

|

||||

|

||||

* Create a new directory

|

||||

* ```bash

|

||||

```bash

|

||||

mkdir tf_go_client

|

||||

```

|

||||

* Change directory

|

||||

* ```bash

|

||||

```bash

|

||||

cd tf_go_client

|

||||

```

|

||||

* Creates a **go.mod** file to track the code's dependencies

|

||||

* ```bash

|

||||

```bash

|

||||

go mod init main

|

||||

```

|

||||

* Install the Grid3 Go Client

|

||||

* ```bash

|

||||

```bash

|

||||

go get github.com/threefoldtech/tfgrid-sdk-go/grid-client

|

||||

```

|

||||

|

||||

|

||||

@@ -64,83 +64,83 @@ You can use the start script to start all services and then set a cron job to ex

|

||||

You can set a cron job to execute a script running rsync to create the snapshots and generate logs at a given interval.

|

||||

|

||||

- First download the script.

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/create_snapshot.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/create_snapshot.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/create_snapshot.sh

|

||||

```

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/create_snapshot.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/create_snapshot.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/create_snapshot.sh

|

||||

```

|

||||

- Set the permissions of the script

|

||||

```

|

||||

chmod +x create_snapshot.sh

|

||||

```

|

||||

```

|

||||

chmod +x create_snapshot.sh

|

||||

```

|

||||

- Make sure to a adjust the snapshot creation script for your specific deployment

|

||||

- Set a cron job

|

||||

```

|

||||

crontab -e

|

||||

```

|

||||

```

|

||||

crontab -e

|

||||

```

|

||||

- Here is an example of a cron job where we execute the script every day at 1 AM and send the logs to `/var/log/snapshots/snapshots-cron.log`.

|

||||

```sh

|

||||

0 1 * * * sh /opt/snapshots/create-snapshot.sh > /var/log/snapshots/snapshots-cron.log 2>&1

|

||||

```

|

||||

```sh

|

||||

0 1 * * * sh /opt/snapshots/create-snapshot.sh > /var/log/snapshots/snapshots-cron.log 2>&1

|

||||

```

|

||||

|

||||

### Start All the Services

|

||||

|

||||

You can start all services by running the provided scripts.

|

||||

|

||||

- Download the script.

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/startall.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/startall.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/startall.sh

|

||||

```

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/startall.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/startall.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/startall.sh

|

||||

```

|

||||

- Set the permissions of the script

|

||||

```

|

||||

chmod +x startall.sh

|

||||

```

|

||||

```

|

||||

chmod +x startall.sh

|

||||

```

|

||||

- Run the script to start all services via docker engine.

|

||||

```

|

||||

./startall.sh

|

||||

```

|

||||

```

|

||||

./startall.sh

|

||||

```

|

||||

|

||||

### Stop All the Services

|

||||

|

||||

You can stop all services by running the provided scripts.

|

||||

|

||||

- Download the script.

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/stopall.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/stopall.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/stopall.sh

|

||||

```

|

||||

- Main net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/mainnet/stopall.sh

|

||||

```

|

||||

- Test net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/testnet/stopall.sh

|

||||

```

|

||||

- Dev net

|

||||

```

|

||||

wget https://github.com/threefoldtech/grid_deployment/blob/development/grid-snapshots/devnet/stopall.sh

|

||||

```

|

||||

- Set the permissions of the script

|

||||

```

|

||||

chmod +x stopall.sh

|

||||

```

|

||||

```

|

||||

chmod +x stopall.sh

|

||||

```

|

||||

- Run the script to stop all services via docker engine.

|

||||

```

|

||||

./stopall.sh

|

||||

```

|

||||

```

|

||||

./stopall.sh

|

||||

```

|

||||

|

||||

## Expose the Snapshots with Rsync

|

||||

|

||||

|

||||

@@ -4,7 +4,7 @@ We present in this section of the developers book a partial list of system compo

|

||||

|

||||

<h2> Table of Contents </h2>

|

||||

|

||||

- [Reliable Message Bus (RMB)](rmb_toc.md)

|

||||

- [Reliable Message Bus - RMB](rmb_toc.md)

|

||||

- [Introduction to RMB](rmb_intro.md)

|

||||

- [RMB Specs](rmb_specs.md)

|

||||

- [RMB Peer](peer.md)

|

||||

|

||||

@@ -14,7 +14,7 @@

|

||||

- [Building](#building)

|

||||

- [Running tests](#running-tests)

|

||||

|

||||

***

|

||||

---

|

||||

|

||||

## What is RMB

|

||||

|

||||

@@ -27,7 +27,7 @@ Out of the box RMB provides the following:

|

||||

- Support for 3rd party hosted relays. Anyone can host a relay and people can use it safely since there is no way messages can be inspected while using e2e. That's similar to `home` servers by `matrix`

|

||||

|

||||

|

||||

***

|

||||

|

||||

## Why

|

||||

|

||||

RMB is developed by ThreefoldTech to create a global network of nodes that are available to host capacity. Each node will act like a single bot where you can ask to host your capacity. This enforced a unique set of requirements:

|

||||

@@ -45,17 +45,17 @@ Starting from this we came up with a more detailed requirements:

|

||||

- Then each message then can be signed by the `bot` keys, hence make it easy to verify the identity of the sender of a message. This is done both ways.

|

||||

- To support federation (using 3rd party relays) we needed to add e2e encryption to make sure messages that are surfing the public internet can't be sniffed

|

||||

- e2e encryption is done by deriving an encryption key from the same identity seed, and share the public key on `tfchain` hence it's available to everyone to use

|

||||

***

|

||||

|

||||

## Specifications

|

||||

|

||||

For details about protocol itself please check the [specs](rmb_specs.md).

|

||||

***

|

||||

|

||||

## How to Use RMB

|

||||

|

||||

There are many ways to use `rmb` because it was built for `bots` and software to communicate. Hence, there is no mobile app for it for example, but instead a set of libraries where you can use to connect to the network, make chitchats with other bots then exit.

|

||||

|

||||

Or you can keep the connection forever to answer other bots requests if you are providing a service.

|

||||

***

|

||||

|

||||

## Libraries

|

||||

|

||||

If there is a library in your preferred language, then you are in luck! Simply follow the library documentations to implement a service bot, or to make requests to other bots.

|

||||

@@ -64,14 +64,14 @@ If there is a library in your preferred language, then you are in luck! Simply f

|

||||

|

||||

- Golang [rmb-sdk-go](https://github.com/threefoldtech/rmb-sdk-go)

|

||||

- Typescript [rmb-sdk-ts](https://github.com/threefoldtech/rmb-sdk-ts)

|

||||

***

|

||||

|

||||

### No Known Libraries

|

||||

|

||||

If there are no library in your preferred language, here's what you can do:

|

||||

|

||||

- Implement a library in your preferred language

|

||||

- If it's too much to do all the signing, verification, e2e in your language then use `rmb-peer`

|

||||

***

|

||||

|

||||

## What is rmb-peer

|

||||

|

||||

think of `rmb-peer` as a gateway that stands between you and the `relay`. `rmb-peer` uses your mnemonics (your identity secret key) to assume your identity and it connects to the relay on your behalf, it maintains the connection forever and takes care of

|

||||

@@ -85,11 +85,11 @@ Then it provide a simple (plain-text) api over `redis`. means to send messages (

|

||||

|

||||

> More details can be found [here](rmb_specs.md)

|

||||

|

||||

***

|

||||

|

||||

## Download

|

||||

|

||||

Please check the latest [releases](https://github.com/threefoldtech/rmb-rs/releases) normally you only need the `rmb-peer` binary, unless you want to host your own relay.

|

||||

***

|

||||

|

||||

## Building

|

||||

|

||||

```bash

|

||||

@@ -97,7 +97,7 @@ git clone git@github.com:threefoldtech/rmb-rs.git

|

||||

cd rmb-rs

|

||||

cargo build --release --target=x86_64-unknown-linux-musl

|

||||

```

|

||||

***

|

||||

|

||||

## Running tests

|

||||

|

||||

While inside the repository

|

||||

|

||||

@@ -15,7 +15,7 @@

|

||||

- [End2End Encryption](#end2end-encryption)

|

||||

- [Rate Limiting](#rate-limiting)

|

||||

|

||||

***

|

||||

---

|

||||

|

||||

# Introduction

|

||||

|

||||

@@ -51,7 +51,7 @@ On the relay, the relay checks federation information set on the envelope and th

|

||||

When the relay receive a message that is destined to a `local` connected client, it queue it for delivery. The relay can maintain a queue of messages per twin to a limit. If the twin does not come back online to consume queued messages, the relay will start to drop messages for that specific twin client.

|

||||

|

||||

Once a twin come online and connect to its peer, the peer will receive all queued messages. the messages are pushed over the web-socket as they are received. the client then can decide how to handle them (a message can be a request or a response). A message type can be inspected as defined by the schema.

|

||||

***

|

||||

|

||||

# Overview of the Operation of RMB Relay

|

||||

|

||||

|

||||

@@ -201,7 +201,6 @@ A response message is defined as follows this is what is sent as a response by a

|

||||

|

||||

Your bot (server) need to make sure to set `destination` to the same value as the incoming request `source`

|

||||

|

||||

The

|

||||

> this response is what is pushed to `msgbus.system.reply`

|

||||

|

||||

```rust

|

||||

@@ -223,7 +222,7 @@ pub struct JsonOutgoingResponse {

|

||||

pub error: Option<JsonError>,

|

||||

}

|

||||

```

|

||||

***

|

||||

|

||||

# End2End Encryption

|

||||

|

||||

Relay is totally opaque to the messages. Our implementation of the relay does not poke into messages except for the routing attributes (source, and destinations addresses, and federation information). But since the relay is designed to be hosted by other 3rd parties (hence federation) you should

|

||||

@@ -246,7 +245,7 @@ As you already understand e2e is completely up to the peers to implement, and ev

|

||||

- derive the same shared key

|

||||

- `shared = ecdh(B.sk, A.pk)`

|

||||

- `plain-data = aes-gcm.decrypt(shared-key, nonce, encrypted)`

|

||||

***

|

||||

|

||||

# Rate Limiting

|

||||

|

||||

To avoid abuse of the server, and prevent DoS attacks on the relay, a rate limiter is used to limit the number of clients' requests.\

|

||||

|

||||

@@ -55,18 +55,16 @@ yarn add @threefold/grid_client

|

||||

To use the Grid Client locally, clone the repository then install the Grid Client:

|

||||

|

||||

- Clone the repository

|

||||

- ```bash

|

||||

git clone https://github.com/threefoldtech/tfgrid-sdk-ts

|

||||

```

|

||||

- Install the Grid Client

|

||||

- With yarn

|

||||

- ```bash

|

||||

yarn install

|

||||

```

|

||||

- With npm

|

||||

- ```bash

|

||||

npm install

|

||||

```

|

||||

```bash

|

||||

git clone https://github.com/threefoldtech/tfgrid-sdk-ts

|

||||

```

|

||||

- Install the Grid Client with yarn or npm

|

||||

```bash

|

||||

yarn install

|

||||

```

|

||||

```bash

|

||||

npm install

|

||||

```

|

||||

|

||||

> Note: In the directory **grid_client/scripts**, we provided a set of scripts to test the Grid Client.

|

||||

|

||||

@@ -94,11 +92,11 @@ Make sure to set the client configuration properly before using the Grid Client.

|

||||

The easiest way to test the installation is to run the following command with either yarn or npm to generate the Grid Client documentation:

|

||||

|

||||

* With yarn

|

||||

* ```

|

||||

```

|

||||

yarn run serve-docs

|

||||

```

|

||||

* With npm

|

||||

* ```

|

||||

```

|

||||

npm run serve-docs

|

||||

```

|

||||

|

||||

@@ -109,15 +107,13 @@ The easiest way to test the installation is to run the following command with ei

|

||||

You can explore the Grid Client by testing the different scripts proposed in **grid_client/scripts**.

|

||||

|

||||

- Update your customized deployments specs if needed

|

||||

- Run using [ts-node](https://www.npmjs.com/ts-node)

|

||||

- With yarn

|

||||

- ```bash

|

||||

yarn run ts-node --project tsconfig-node.json scripts/zdb.ts

|

||||

```

|

||||

- With npx

|

||||

- ```bash

|

||||

npx ts-node --project tsconfig-node.json scripts/zdb.ts

|

||||

```

|

||||

- Run using [ts-node](https://www.npmjs.com/ts-node) with yarn or npx

|

||||

```bash

|

||||

yarn run ts-node --project tsconfig-node.json scripts/zdb.ts

|

||||

```

|

||||

```bash

|

||||

npx ts-node --project tsconfig-node.json scripts/zdb.ts

|

||||

```

|

||||

|

||||

## Reference API

|

||||

|

||||

|

||||

@@ -60,33 +60,33 @@ To start the services for development or testing make sure first you have all th

|

||||

|

||||

- Clone this repo

|

||||

|

||||

```bash

|

||||

```

|

||||

git clone https://github.com/threefoldtech/tfgrid-sdk-go.git

|

||||

cd tfgrid-sdk-go/grid-proxy

|

||||

```

|

||||

|

||||

- The `Makefile` has all that you need to deal with Db, Explorer, Tests, and Docs.

|

||||

|

||||

```bash

|

||||

```

|

||||

make help # list all the available subcommands.

|

||||

```

|

||||

|

||||

- For a quick test explorer server.

|

||||

|

||||

```bash

|

||||

```

|

||||

make all-start e=<MNEMONICS>

|

||||

```

|

||||

|

||||

Now you can access the server at `http://localhost:8080`

|

||||

- Run the tests

|

||||

|

||||

```bash

|

||||

```

|

||||

make test-all

|

||||

```

|

||||

|

||||

- Generate docs.

|

||||

|

||||

```bash

|

||||

```

|

||||

make docs

|

||||

```

|

||||

|

||||

@@ -108,7 +108,7 @@ For more illustrations about the commands needed to work on the project, see the

|

||||

|

||||

- You can either build the project:

|

||||

|

||||

```bash

|

||||

```

|

||||

make build

|

||||

chmod +x cmd/proxy_server/server \

|

||||

&& mv cmd/proxy_server/server /usr/local/bin/gridproxy-server

|

||||

@@ -117,7 +117,7 @@ For more illustrations about the commands needed to work on the project, see the

|

||||

- Or download a release:

|

||||

Check the [releases](https://github.com/threefoldtech/tfgrid-sdk-go/releases) page and edit the next command with the chosen version.

|

||||

|

||||

```bash

|

||||

```

|

||||

wget https://github.com/threefoldtech/tfgrid-sdk-go/releases/download/v1.6.7-rc2/tfgridclient_proxy_1.6.7-rc2_linux_amd64.tar.gz \

|

||||

&& tar -xzf tfgridclient_proxy_1.6.7-rc2_linux_amd64.tar.gz \

|

||||

&& chmod +x server \

|

||||

@@ -128,7 +128,7 @@ For more illustrations about the commands needed to work on the project, see the

|

||||

|

||||

- Create the service file

|

||||

|

||||

```bash

|

||||

```

|

||||

cat << EOF > /etc/systemd/system/gridproxy-server.service

|

||||

[Unit]

|

||||

Description=grid proxy server

|

||||

|

||||

@@ -21,17 +21,17 @@ TFCMD is available as binaries. Make sure to download the latest release and to

|

||||

An easy way to use TFCMD is to download and extract the TFCMD binaries to your path.

|

||||

|

||||

- Download latest release from [releases](https://github.com/threefoldtech/tfgrid-sdk-go/releases)

|

||||

- ```

|

||||

wget <binaries_url>

|

||||

```

|

||||

```

|

||||

wget <binaries_url>

|

||||

```

|

||||

- Extract the binaries

|

||||

- ```

|

||||

tar -xvf <binaries_file>

|

||||

```

|

||||

```

|

||||

tar -xvf <binaries_file>

|

||||

```

|

||||

- Move `tfcmd` to any `$PATH` directory:

|

||||

```bash

|

||||

mv tfcmd /usr/local/bin

|

||||

```

|

||||

```

|

||||

mv tfcmd /usr/local/bin

|

||||

```

|

||||

|

||||

## Login

|

||||

|

||||

|

||||

@@ -23,14 +23,14 @@ To install TFROBOT, simply download and extract the TFROBOT binaries to your pat

|

||||

cd tfgrid-sdk-go

|

||||

```

|

||||

- Download latest release from [releases](https://github.com/threefoldtech/tfgrid-sdk-go/releases)

|

||||

- ```

|

||||

```

|

||||

wget https://github.com/threefoldtech/tfgrid-sdk-go/releases/download/v0.14.4/tfgrid-sdk-go_Linux_x86_64.tar.gz

|

||||

```

|

||||

- Extract the binaries

|

||||

- ```

|

||||

```

|

||||

tar -xvf tfgrid-sdk-go_Linux_x86_64.tar.gz

|

||||

```

|

||||

- Move `tfrobot` to any `$PATH` directory:

|

||||

```bash

|

||||

```

|

||||

mv tfrobot /usr/local/bin

|

||||

```

|

||||

@@ -743,18 +743,18 @@ To learn more about this process, [watch this great video](https://youtu.be/axvK

|

||||

If you've already done an SSH connection on your computer, the issue is most probably that the "host key has just been changed". To fix this, try one of those two solutions:

|

||||

|

||||

* Linux and MAC:

|

||||

* ```

|

||||

```

|

||||

sudo rm ~/.ssh/known_hosts

|

||||

```

|

||||

* Windows:

|

||||

* ```

|

||||

```

|

||||

rm ~/.ssh/known_hosts

|

||||

```

|

||||

|

||||

To be more specific, you can remove the probematic host:

|

||||

|

||||

* Windows, Linux and MAC:

|

||||

* ```

|

||||

```

|

||||

ssh-keygen -R <host>

|

||||

```

|

||||

|

||||

@@ -2074,7 +2074,7 @@ There can be many different fixes for this error. Here are some troubleshooting

|

||||

* [Flash the RAID controller](https://fohdeesha.com/docs/perc.html) (i.e. crossflashing), OR;

|

||||

* Change the controller to a Dell H310 controller (for Dell servers)

|

||||

* Try the command **badblocks** (replace **sda** with your specific disk). Note that this command will delete all the data on the disk

|

||||

* ```

|

||||

```

|

||||

sudo badblocks -svw -b 512 -t 0x00 /dev/sda

|

||||

```

|

||||

|

||||

@@ -2094,7 +2094,7 @@ Anyone experiencing frequently this issue where Z-OS sometimes detects an SSD as

|

||||

|

||||

* Boot a Ubuntu Linux live USB

|

||||

* Install **gnome-disks** if it isn't already installed:

|

||||

* ```

|

||||

```

|

||||

sudo apt install gnome-disks

|

||||

```

|

||||

* Open the application launcher and search for **Disks**

|

||||

@@ -2161,15 +2161,13 @@ Many different reasons can cause this issue. When you get that error, sometimes

|

||||

|

||||

* Fix 1:

|

||||

* Force the wiping of the disk:

|

||||

* ```

|

||||

```

|

||||

sudo wipefs -af /dev/sda

|

||||

```

|

||||

* Fix 2:

|

||||

* Unmount the disk then wipe it:

|

||||

* ```

|

||||

sudo umount /dev/sda

|

||||

```

|

||||

* ```

|

||||

sudo umount /dev/sda

|

||||

sudo wipefs -a /dev/sda

|

||||

```

|

||||

|

||||

|

||||

@@ -7,7 +7,7 @@

|

||||

- [Burn the Zero-OS Bootstrap Image](#burn-the-zero-os-bootstrap-image)

|

||||

- [CD/DVD BIOS](#cddvd-bios)

|

||||

- [USB Key BIOS+UEFI](#usb-key-biosuefi)

|

||||

- [BalenaEtcher (MAC, Linux, Windows)](#balenaetcher-mac-linux-windows)

|

||||

- [BalenaEtcher - MAC, Linux, Windows](#balenaetcher---mac-linux-windows)

|

||||

- [CLI (Linux)](#cli-linux)

|

||||

- [Rufus (Windows)](#rufus-windows)

|

||||

- [Additional Information (Optional)](#additional-information-optional)

|

||||

@@ -70,7 +70,7 @@ For the BIOS **ISO** image, download the file and burn it on a DVD.

|

||||

|

||||

There are many ways to burn the bootstrap image on a USB key. The easiest way that works for all operating systems is to use BalenaEtcher. We also provide other methods.

|

||||

|

||||

#### BalenaEtcher (MAC, Linux, Windows)

|

||||

#### BalenaEtcher - MAC, Linux, Windows

|

||||

|

||||

For **MAC**, **Linux** and **Windows**, you can use [BalenaEtcher](https://www.balena.io/etcher/) to load/flash the image on a USB stick. This program also formats the USB in the process. This will work for the option **EFI IMG** for UEFI boot, and with the option **USB** for BIOS boot. Simply follow the steps presented to you and make sure you select the bootstrap image file you downloaded previously.

|

||||

|

||||

|

||||

@@ -35,7 +35,7 @@ We cover the basic steps to install the GPU on your 3Node.

|

||||

* Install the GPU on the server

|

||||

* Note: You might need to move or remove some pieces of your server to make room for the GPU

|

||||

* (Optional) Boot the 3Node with a Linux distro (e.g. Ubuntu) and use the terminal to check if the GPU is recognized by the system

|

||||

* ```

|

||||

```

|

||||

sudo lshw -C Display

|

||||

```

|

||||

* Output example with an AMD Radeon (on the line `product: ...`)

|

||||

|

||||

Binary file not shown.

|

After Width: | Height: | Size: 9.0 KiB |

@@ -35,64 +35,4 @@ The ThreeFold Alpha minting tool will present the following information for each

|

||||

- SRU

|

||||

- HRU

|

||||

- TFT Farmed

|

||||

- Payout Address

|

||||

|

||||

<!-- NOTE: This is removed from the new dashboard, but might be brought back.

|

||||

|

||||

## Introduction

|

||||

|

||||

You can easily consult minting receipts of all your 3Nodes on the ThreeFold Dashboard to get essential minting information of your 3Nodes and your ThreeFold farm. With your minting receipt hash, you can then query the ThreeFold Alpha minting tool for further minting information.

|

||||

|

||||

## Download Minting Receipts of Your Farm

|

||||

|

||||

You can download minting receipts of your whole farm directly on the ThreeFold Dashboard.

|

||||

|

||||

- On the [ThreeFold Dashboard](https://dashboard.grid.tf/), go to **TFChain** -> **TF Minting Reports**

|

||||

- In the section **Your Farms**, on the left of your **Farm ID**, click on the down arrow button

|

||||

- Click on **Download Minting Receipts**

|

||||

|

||||

## Download Minting Receipts of a 3Node

|

||||

|

||||

You can download minting receipts of a single 3Node directly on the ThreeFold Dashboard.

|

||||

|

||||

- On the [ThreeFold Dashboard](https://dashboard.grid.tf/), go to **Portal** -> **Farms**

|

||||

- In the section **Your Farm Nodes**, on the left of your **Node ID**, click on the down arrow button

|

||||

- Click on **Node Statistics**

|

||||

- Click on **Download Node Receipt**

|

||||

|

||||

## Minting Receipts Information

|

||||

|

||||

The minting receipts contain the following information:

|

||||

|

||||

- Minting: <minting_receipt_hash>

|

||||

- start: <start of minting period>

|

||||

- end: <end of minting period>

|

||||

- TFT: <TFT minted by the 3Node>

|

||||

- Cloud Units: <3Node Resources>

|

||||

|

||||

## Alpha Minting Tool

|

||||

|

||||

You can query additional minting information by using the [Dashboard Alpha Minting tool](https://dashboard.grid.tf/other/minting).

|

||||

|

||||

- Download the minting receipts of your farm or of a single 3Node

|

||||

- Copy a minting receipt hash

|

||||

- Open the ThreeFold Alpha Minting tool by clicking on **Minting** on the left-side [ThreeFold Dashboard](https://dashboard.grid.tf/) menu

|

||||

- Paste the minting receipt hash

|

||||

|

||||

The ThreeFold Alpha minting tool will present the following information for each minting receipt hash:

|

||||

|

||||

- Node ID

|

||||

- Farm Name

|

||||

- Measured Uptime

|

||||

- Node Resources

|

||||

- CU

|

||||

- SU

|

||||

- NU

|

||||

- CRU

|

||||

- MRU

|

||||

- SRU

|

||||

- HRU

|

||||

- TFT Farmed

|

||||

- Payout Address

|

||||

|

||||

-->

|

||||

- Payout Address

|

||||

@@ -142,7 +142,7 @@ Once you've verified that the Farmerbot runs properly, you can stop the Farmerbo

|

||||

It is highly recommended to set a Ubuntu systemd service to keep the Farmerbot running after exiting the VM.

|

||||

|

||||

* Create the service file

|

||||

* ```

|

||||

```

|

||||

nano /etc/systemd/system/farmerbot.service

|

||||

```

|

||||

* Set the Farmerbot systemd service

|

||||

|

||||

@@ -30,7 +30,7 @@ We start by deploying a full VM on the ThreeFold Playground.

|

||||

* Minimum storage: 50GB

|

||||

* After deployment, note the VM IPv4 address

|

||||

* Connect to the VM via SSH

|

||||

* ```

|

||||

```

|

||||

ssh root@VM_IPv4_address

|

||||

```

|

||||

|

||||

@@ -39,39 +39,39 @@ We start by deploying a full VM on the ThreeFold Playground.

|

||||

We create a root-access user. Note that this step is optional.

|

||||

|

||||

* Once connected, create a new user with root access (for this guide we use "newuser")

|

||||

* ```

|

||||

```

|

||||

adduser newuser

|

||||

```

|

||||

* You should now see the new user directory

|

||||

* ```

|

||||

```

|

||||

ls /home

|

||||

```

|

||||

* Give sudo capacity to the new user

|

||||

* ```

|

||||

```

|

||||

usermod -aG sudo newuser

|

||||

```

|

||||

* Switch to the new user

|

||||

* ```

|

||||

```

|

||||

su - newuser

|

||||

```

|

||||

* Create a directory to store the public key

|

||||

* ```

|

||||

```

|

||||

mkdir ~/.ssh

|

||||

```

|

||||

* Give read, write and execute permissions for the directory to the new user

|

||||

* ```

|

||||

```

|

||||

chmod 700 ~/.ssh

|

||||

```

|

||||

* Add the SSH public key in the file **authorized_keys** and save it

|

||||

* ```

|

||||

```

|

||||

nano ~/.ssh/authorized_keys

|

||||

```

|

||||

* Exit the VM

|

||||

* ```

|

||||

```

|

||||

exit

|

||||

```

|

||||

* Reconnect with the new user

|

||||

* ```

|

||||

```

|

||||

ssh newuser@VM_IPv4_address

|

||||

```

|

||||

|

||||

@@ -81,19 +81,19 @@ We set a firewall to monitor and control incoming and outgoing network traffic.

|

||||

For our security rules, we want to allow SSH, HTTP and HTTPS (443 and 8443).

|

||||

We thus add the following rules:

|

||||

* Allow SSH (port 22)

|

||||

* ```

|

||||

```

|

||||

sudo ufw allow ssh

|

||||

```

|

||||

* Allow port 4001

|

||||

* ```

|

||||

```

|

||||

sudo ufw allow 4001

|

||||

```

|

||||

* To enable the firewall, write the following:

|

||||

* ```

|

||||

```

|

||||

sudo ufw enable

|

||||

```

|

||||

* To see the current security rules, write the following:

|

||||

* ```

|

||||

```

|

||||

sudo ufw status verbose

|

||||

```

|

||||

You now have enabled the firewall with proper security rules for your IPFS deployment.

|

||||

@@ -109,23 +109,23 @@ If you want to run pubsub capabilities, you need to allow **port 8081**. For mor

|

||||

|

||||

We install the [IPFS Kubo binary](https://docs.ipfs.tech/install/command-line/#install-official-binary-distributions).

|

||||

* Download the binary

|

||||

* ```

|

||||

```

|

||||

wget https://dist.ipfs.tech/kubo/v0.24.0/kubo_v0.24.0_linux-amd64.tar.gz

|

||||

```

|

||||

* Unzip the file

|

||||

* ```

|

||||

```

|

||||

tar -xvzf kubo_v0.24.0_linux-amd64.tar.gz

|

||||

```

|

||||

* Change directory

|

||||

* ```

|

||||

```

|

||||

cd kubo

|

||||

```

|

||||

* Run the install script

|

||||

* ```

|

||||

```

|

||||

sudo bash install.sh

|

||||

```

|

||||

* Verify that IPFS Kubo is properly installed

|

||||

* ```

|

||||

```

|

||||

ipfs --version

|

||||

```

|

||||

|

||||

@@ -134,23 +134,23 @@ We install the [IPFS Kubo binary](https://docs.ipfs.tech/install/command-line/#i

|

||||

We initialize IPFS and run the IPFS daemon.

|

||||

|

||||

* Initialize IPFS

|

||||

* ```

|

||||

```

|

||||

ipfs init --profile server

|

||||

```

|

||||

* Increase the storage capacity (optional)

|

||||

* ```

|

||||

```

|

||||

ipfs config Datastore.StorageMax 30GB

|

||||

```

|

||||

* Run the IPFS daemon

|

||||

* ```

|

||||

```

|

||||

ipfs daemon

|

||||

```

|

||||

* Set an Ubuntu systemd service to keep the IPFS daemon running after exiting the VM

|

||||

* ```

|

||||

```

|

||||

sudo nano /etc/systemd/system/ipfs.service

|

||||

```

|

||||

* Enter the systemd info

|

||||

* ```

|

||||

```

|

||||

[Unit]

|

||||

Description=IPFS Daemon

|

||||

[Service]

|

||||

@@ -163,27 +163,27 @@ We initialize IPFS and run the IPFS daemon.

|

||||

WantedBy=multi-user.target

|

||||

```

|

||||

* Enable the service

|

||||

* ```

|

||||

```

|

||||

sudo systemctl daemon-reload

|

||||

sudo systemctl enable ipfs

|

||||

sudo systemctl start ipfs

|

||||

```

|

||||

* Verify that the IPFS daemon is properly running

|

||||

* ```

|

||||

```

|

||||

sudo systemctl status ipfs

|

||||

```

|

||||

## Final Verification

|

||||

We reboot and reconnect to the VM and verify that IPFS is properly running as a final verification.

|

||||

* Reboot the VM

|

||||

* ```

|

||||

```

|

||||

sudo reboot

|

||||

```

|

||||

* Reconnect to the VM

|

||||

* ```

|

||||

```

|

||||

ssh newuser@VM_IPv4_address

|

||||

```

|

||||

* Check that the IPFS daemon is running

|

||||

* ```

|

||||

```

|

||||

ipfs swarm peers

|

||||

```

|

||||

## Questions and Feedback

|

||||

|

||||

@@ -31,7 +31,7 @@ We start by deploying a micro VM on the ThreeFold Playground.

|

||||

* Minimum storage: 50GB

|

||||

* After deployment, note the VM IPv4 address

|

||||

* Connect to the VM via SSH

|

||||

* ```

|

||||

```

|

||||

ssh root@VM_IPv4_address

|

||||

```

|

||||

|

||||

@@ -40,11 +40,11 @@ We start by deploying a micro VM on the ThreeFold Playground.

|

||||

We install the prerequisites before installing and setting IPFS.

|

||||

|

||||

* Update Ubuntu

|

||||

* ```

|

||||

```

|

||||

apt update

|

||||

```

|

||||

* Install nano and ufw

|

||||

* ```

|

||||

```

|

||||

apt install nano && apt install ufw -y

|

||||

```

|

||||

|

||||

@@ -57,20 +57,20 @@ For our security rules, we want to allow SSH, HTTP and HTTPS (443 and 8443).

|

||||

We thus add the following rules:

|

||||

|

||||

* Allow SSH (port 22)

|

||||

* ```

|

||||

```

|

||||

ufw allow ssh

|

||||

```

|

||||

* Allow port 4001

|

||||

* ```

|

||||

```

|

||||

ufw allow 4001

|

||||

```

|

||||

* To enable the firewall, write the following:

|

||||

* ```

|

||||

```

|

||||

ufw enable

|

||||

```

|

||||

|

||||

* To see the current security rules, write the following:

|

||||

* ```

|

||||

```

|

||||

ufw status verbose

|

||||

```

|

||||

|

||||

@@ -91,23 +91,23 @@ If you want to run pubsub capabilities, you need to allow **port 8081**. For mor

|

||||

We install the [IPFS Kubo binary](https://docs.ipfs.tech/install/command-line/#install-official-binary-distributions).

|

||||

|

||||

* Download the binary

|

||||

* ```

|

||||

```

|

||||

wget https://dist.ipfs.tech/kubo/v0.24.0/kubo_v0.24.0_linux-amd64.tar.gz

|

||||

```

|

||||

* Unzip the file

|

||||

* ```

|

||||

```

|

||||

tar -xvzf kubo_v0.24.0_linux-amd64.tar.gz

|

||||

```

|

||||

* Change directory

|

||||

* ```

|

||||

```

|

||||

cd kubo

|

||||

```

|

||||

* Run the install script

|

||||

* ```

|

||||

```

|

||||

bash install.sh

|

||||

```

|

||||

* Verify that IPFS Kubo is properly installed

|

||||

* ```

|

||||

```

|

||||

ipfs --version

|

||||

```

|

||||

|

||||

@@ -116,15 +116,15 @@ We install the [IPFS Kubo binary](https://docs.ipfs.tech/install/command-line/#i

|

||||

We initialize IPFS and run the IPFS daemon.

|

||||

|

||||

* Initialize IPFS

|

||||

* ```

|

||||

```

|

||||

ipfs init --profile server

|

||||

```

|

||||

* Increase the storage capacity (optional)

|

||||

* ```

|

||||

```

|

||||

ipfs config Datastore.StorageMax 30GB

|

||||

```

|

||||

* Run the IPFS daemon

|

||||

* ```

|

||||

```

|

||||

ipfs daemon

|

||||

```

|

||||

|

||||

@@ -133,19 +133,19 @@ We initialize IPFS and run the IPFS daemon.

|

||||

We set the IPFS daemon with zinit. This will make sure that the IPFS daemon starts at each VM reboot or if it stops functioning momentarily.

|

||||

|

||||

* Create the yaml file

|

||||

* ```

|

||||

```

|

||||

nano /etc/zinit/ipfs.yaml

|

||||

```

|

||||

* Set the execution command

|

||||

* ```

|

||||

```

|

||||

exec: /usr/local/bin/ipfs daemon

|

||||

```

|

||||

* Run the IPFS daemon with the zinit monitor command

|

||||

* ```

|

||||

```

|

||||

zinit monitor ipfs

|

||||

```

|

||||

* Verify that the IPFS daemon is running

|

||||

* ```

|

||||

```

|

||||

ipfs swarm peers

|

||||

```

|

||||

|

||||

@@ -154,11 +154,11 @@ We set the IPFS daemon with zinit. This will make sure that the IPFS daemon star

|

||||

We reboot and reconnect to the VM and verify that IPFS is properly running as a final verification.

|

||||

|

||||

* Reboot the VM

|

||||

* ```

|

||||

```

|

||||

reboot -f

|

||||

```

|

||||

* Reconnect to the VM and verify that the IPFS daemon is running

|

||||

* ```

|

||||

```

|

||||

ipfs swarm peers

|

||||

```

|

||||

|

||||

|

||||

@@ -24,6 +24,7 @@

|

||||

- [Become the superuser (su) on Linux](#become-the-superuser-su-on-linux)

|

||||

- [Exit a session](#exit-a-session)

|

||||

- [Know the current user](#know-the-current-user)

|

||||

- [See the path of a package](#see-the-path-of-a-package)

|

||||

- [Set the path of a package](#set-the-path-of-a-package)

|

||||

- [See the current path](#see-the-current-path-1)

|

||||

- [Find the current shell](#find-the-current-shell)

|

||||

@@ -127,11 +128,11 @@ You can also set a number of counts with `-c` on Linux and MAC and `-n` on Windo

|

||||

Here are the steps to install [Go](https://go.dev/).

|

||||

|

||||

* Install go

|

||||

* ```

|

||||

```

|

||||

sudo apt install golang-go

|

||||

```

|

||||

* Verify that go is properly installed

|

||||

* ```

|

||||

```

|

||||

go version

|

||||

```

|

||||

|

||||

@@ -142,19 +143,19 @@ Here are the steps to install [Go](https://go.dev/).

|

||||

Follow those steps to install [Brew](https://brew.sh/)

|

||||

|

||||

* Installation command from Brew:

|

||||

* ```

|

||||

```

|

||||

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

|

||||

```

|

||||

* Add the path to the **.profile** directory. Replace <user_name> by your username.

|

||||

* ```

|

||||

```

|

||||

echo 'eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"' >> /home/<user_name>/.profile

|

||||

```

|

||||

* Evaluation the following:

|

||||

* ```

|

||||

```

|

||||

eval "$(/home/linuxbrew/.linuxbrew/bin/brew shellenv)"

|

||||

```

|

||||

* Verify the installation

|

||||

* ```

|

||||

```

|

||||

brew doctor

|

||||

```

|

||||

|

||||

@@ -163,27 +164,27 @@ Follow those steps to install [Brew](https://brew.sh/)

|

||||

### Brew basic commands

|

||||

|

||||

* To update brew in general:

|

||||

* ```

|

||||

```

|

||||

brew update

|

||||

```

|

||||

* To update a specific package:

|

||||

* ```

|

||||

```

|

||||

brew update <package_name>

|

||||

```

|

||||

* To install a package:

|

||||

* ```

|

||||

```

|

||||

brew install <package_name>

|

||||

```

|

||||

* To uninstall a package:

|

||||

* ```

|

||||

```

|

||||

brew uninstall <package_name>

|

||||

```

|

||||

* To search a package:

|

||||

* ```

|

||||

```

|

||||

brew search <package_name>

|

||||

```

|

||||

* [Uninstall Brew](https://github.com/homebrew/install#uninstall-homebrew)

|

||||

* ```

|

||||

```

|

||||

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/uninstall.sh)"

|

||||

```

|

||||

|

||||

@@ -194,11 +195,11 @@ Follow those steps to install [Brew](https://brew.sh/)

|

||||

Installing Terraform with Brew is very simple by following the [Terraform documentation](https://developer.hashicorp.com/terraform/downloads).

|

||||

|

||||

* Compile HashiCorp software on Homebrew's infrastructure

|

||||

* ```

|

||||

```

|

||||

brew tap hashicorp/tap

|

||||

```

|

||||

* Install Terraform

|

||||

* ```

|

||||

```

|

||||

brew install hashicorp/tap/terraform

|

||||

```

|

||||

|

||||

@@ -207,27 +208,27 @@ Installing Terraform with Brew is very simple by following the [Terraform docume

|

||||

### Yarn basic commands

|

||||

|

||||

* Add a package

|

||||

* ```

|

||||

```

|

||||

yarn add

|

||||

```

|

||||

* Initialize the development of a package

|

||||

* ```

|

||||

```

|

||||

yarn init

|

||||

```

|

||||

* Install all the dependencies in the **package.json** file

|

||||

* ```

|

||||

```

|

||||

yarn install

|

||||

```

|

||||

* Publish a package to a package manager

|

||||

* ```

|

||||

```

|

||||

yarn publish

|

||||

```

|

||||

* Remove unused package from the current package

|

||||

* ```

|

||||

```

|

||||

yarn remove

|

||||

```

|

||||

* Clean the cache

|

||||

* ```

|

||||

```

|

||||

yarn cache clean

|

||||

```

|

||||

|

||||

@@ -260,11 +261,11 @@ ls -ld .?*

|

||||

You can use **tree** to display the files and organization of a directory:

|

||||

|

||||

* General command

|

||||

* ```

|

||||

```

|

||||

tree

|

||||

```

|

||||

* View hidden files

|

||||

* ```

|

||||

```

|

||||

tree -a

|

||||

```

|

||||

|

||||

@@ -336,10 +337,10 @@ which <application_name>

|

||||

|

||||

On MAC and Linux, you can use **coreutils** and **realpath** from Brew:

|

||||

|

||||

* ```

|

||||

```

|

||||

brew install coreutils

|

||||

```

|

||||

* ```

|

||||

```

|

||||

realpath file_name

|

||||

```

|

||||

|

||||

@@ -350,11 +351,11 @@ On MAC and Linux, you can use **coreutils** and **realpath** from Brew:

|

||||

You can use either command:

|

||||

|

||||

* Option 1

|

||||

* ```

|

||||

```

|

||||

sudo -i

|

||||

```

|

||||

* Option 2

|

||||

* ```

|

||||

```

|

||||

sudo -s

|

||||

```

|

||||

|

||||

@@ -364,10 +365,10 @@ You can use either command:

|

||||

|

||||

You can use either command depending on your shell:

|

||||

|

||||

* ```

|

||||

```

|

||||

exit

|

||||

```

|

||||

* ```

|

||||

```

|

||||

logout

|

||||

```

|

||||

|

||||

@@ -377,7 +378,7 @@ You can use either command depending on your shell:

|

||||

|

||||

You can use the following command:

|

||||

|

||||

* ```

|

||||

```

|

||||

whoami

|

||||

```

|

||||

|

||||

@@ -387,7 +388,7 @@ You can use the following command:

|

||||

|

||||

To see the path of a package, you can use the following command:

|

||||

|

||||

* ```

|

||||

```

|

||||

whereis <package_name>

|

||||

```

|

||||

|

||||

@@ -414,11 +415,11 @@ pwd

|

||||

### Find the current shell

|

||||

|

||||

* Compact version

|

||||

* ```

|

||||

```

|

||||

echo $SHELL

|

||||

```

|

||||

* Detailed version

|

||||

* ```

|

||||

```

|

||||

ls -l /proc/$$/exe

|

||||

```

|

||||

|

||||

@@ -427,35 +428,35 @@ pwd

|

||||

### SSH into Remote Server

|

||||

|

||||

* Create SSH key pair

|

||||

* ```

|

||||

```

|

||||

ssh-keygen

|

||||

```

|

||||

* Install openssh-client on the local computer*

|

||||

* ```

|

||||

```

|

||||

sudo apt install openssh-client

|

||||

```

|

||||

* Install openssh-server on the remote computer*

|

||||

* ```

|

||||

```

|

||||

sudo apt install openssh-server

|

||||

```

|

||||

* Copy public key

|

||||

* ```

|

||||

```

|

||||

cat ~/.ssh/id_rsa.pub

|

||||

```

|

||||

* Create the ssh directory on the remote computer

|

||||

* ```

|

||||

```

|

||||

mkdir ~/.ssh

|

||||

```

|

||||

* Add public key in the file **authorized_keys** on the remote computer

|

||||

* ```

|

||||

```

|

||||

nano ~/.ssh/authorized_keys

|

||||

```

|

||||

* Check openssh-server status

|

||||

* ```

|

||||

```

|

||||

sudo service ssh status

|

||||

```

|

||||

* SSH into the remote machine

|

||||

* ```

|

||||

```

|

||||

ssh <username>@<remote_server_IP_or_hostname>

|

||||

```

|

||||

|

||||

@@ -468,11 +469,11 @@ To enable remote login on a MAC, [read this section](#enable-remote-login-on-mac

|

||||

### Replace a string by another string in a text file

|

||||

|

||||

* Replace one string by another (e.g. **old_string**, **new_string**)

|

||||

* ```

|

||||

```

|

||||

sed -i 's/old_string/new_string/g' <file_path>/<file_name>

|

||||

```

|

||||

* Use environment variables (double quotes)

|

||||

* ```

|

||||

```

|

||||

sed -i "s/old_string/$env_variable/g" <file_path>/<file_name>

|

||||

```

|

||||

|

||||

@@ -529,11 +530,11 @@ date

|

||||

You can use [Dig](https://man.archlinux.org/man/dig.1) to gather DNS information of a website

|

||||

|

||||

* Template

|

||||

* ```

|

||||

```

|

||||

dig <website.tld>

|

||||

```

|

||||

* Example

|

||||

* ```

|

||||

```

|

||||

dig threefold.io

|

||||

```

|

||||

|

||||

@@ -546,31 +547,31 @@ You can also use online tools such as [DNS Checker](https://dnschecker.org/).

|

||||

We present one of many ways to partition and mount a disk.

|

||||

|

||||

* Create partition with [gparted](https://gparted.org/)

|

||||

* ```

|

||||

```

|

||||

sudo gparted

|

||||

```

|

||||

* Find the disk you want to mount (e.g. **sdb**)

|

||||

* ```

|

||||

```

|

||||

sudo fdisk -l

|

||||

```

|

||||

* Create a directory to mount the disk to

|

||||

* ```

|

||||

```

|

||||

sudo mkdir /mnt/disk

|

||||

```

|

||||

* Open fstab

|

||||

* ```

|

||||

```

|

||||

sudo nano /etc/fstab

|

||||

```

|

||||

* Append the following to the fstab with the proper disk path (e.g. **/dev/sdb**) and mount point (e.g. **/mnt/disk**)

|

||||

* ```

|

||||

```

|

||||

/dev/sdb /mnt/disk ext4 defaults 0 0

|

||||

```

|

||||

* Mount the disk

|

||||

* ```

|

||||

```

|

||||

sudo mount /mnt/disk

|

||||

```

|

||||

* Add permissions (as needed)

|

||||

* ```

|

||||

```

|

||||

sudo chmod -R 0777 /mnt/disk

|

||||

```

|

||||

|

||||

@@ -583,36 +584,36 @@ We present one of many ways to partition and mount a disk.

|

||||

You can use [gocryptfs](https://github.com/rfjakob/gocryptfs) to encrypt files.

|

||||

|

||||

* Install gocryptfs

|

||||

* ```

|

||||

```

|

||||

apt install gocryptfs

|

||||

```

|

||||

* Create a vault directory (e.g. **vaultdir**) and a mount directory (e.g. **mountdir**)

|

||||

* ```

|

||||

```

|

||||

mkdir vaultdir mountdir

|

||||

```

|

||||

* Initiate the vault

|

||||

* ```

|

||||

```

|

||||

gocryptfs -init vaultdir

|

||||

```

|

||||

* Mount the mount directory with the vault

|

||||

* ```

|

||||

```

|

||||

gocryptfs vaultdir mountdir

|

||||

```

|

||||

* You can now create files in the folder. For example:

|

||||

* ```

|

||||

```

|

||||

touch mountdir/test.txt

|

||||

```

|

||||

* The new file **test.txt** is now encrypted in the vault

|

||||

* ```

|

||||

```

|

||||

ls vaultdir

|

||||